Most people who invest in a CRO program have the same mental model of how returns are generated: run tests, find winners, watch revenue go up. A/B testing is the engine, and everything else is noise.

Their expectation is that test wins generate nearly all of the return.

That framing isn't entirely wrong, but it's dangerously incomplete — and it's the reason so many brands feel like their CRO investment isn't "adding up."

At Enavi, we recently hosted an event with our friends at Convert called the Shopify CRO Reality Check, and one point kept surfacing throughout: the ROI of CRO is far broader than most brands realize. When you limit your definition of return to test wins alone, you're leaving a significant portion of the program's value unaccounted for, and you're setting yourself up for frustration when the numbers don't match the hype.

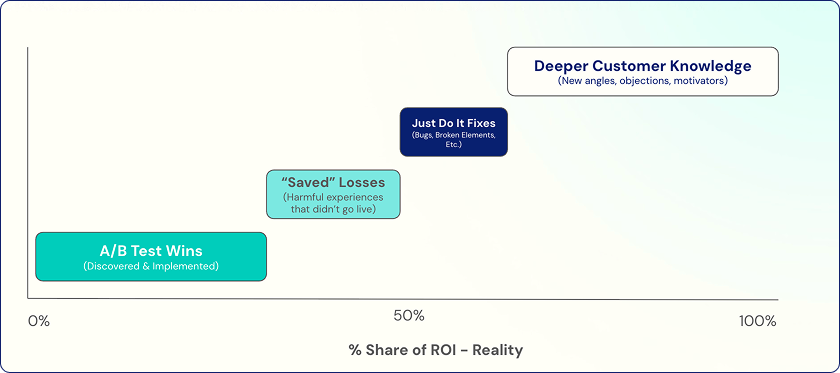

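Here's what the full picture actually looks like. CRO returns come from four sources: A/B test wins, saved losses, "just do it" fixes, and deeper customer knowledge.

Let's dive into these one by one.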

1. A/B Test Wins (Discovered & Implemented)

This is the obvious one, and yes, it matters. Finding experiences that outperform the control and shipping them to production is the most immediately quantifiable output of any CRO program. It's what stakeholders think about when they approve the budget, and it's what most reporting focuses on.

The key word there is "implemented." A winning test that sits in a queue for weeks while dev resources are tied up is a deferred return at best and a forgotten one at worst. Tracking time-to-implementation — and targeting a two-week window — is one of the most important operational habits a CRO program can build.
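Tracking time-to-implementation doesn't require special tooling. The sketch below is a minimal illustration, not Enavi's process: it assumes you log the date each winning test concludes and the date it ships, and it flags winners that have exceeded the two-week window. The field names, test names, and dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Assumed target, taken from the two-week window described above.
TARGET_DAYS = 14


@dataclass
class WinningTest:
    name: str
    concluded: date          # day the test reached a winning result
    shipped: Optional[date]  # day the winner went live; None while still queued


def days_to_implement(test: WinningTest, today: date) -> int:
    """Days from test conclusion to production, or to `today` if not yet shipped."""
    end = test.shipped if test.shipped is not None else today
    return (end - test.concluded).days


def overdue(tests: list[WinningTest], today: date) -> list[str]:
    """Names of winners whose time-to-implementation exceeds TARGET_DAYS."""
    return [t.name for t in tests if days_to_implement(t, today) > TARGET_DAYS]


# Hypothetical tests for illustration only.
today = date(2024, 6, 30)
tests = [
    WinningTest("pdp-reviews-above-fold", date(2024, 6, 1), date(2024, 6, 10)),
    WinningTest("cart-free-shipping-bar", date(2024, 6, 3), None),
]
print(overdue(tests, today))  # ['cart-free-shipping-bar']
```

Counting unshipped winners against today's date is a deliberate choice: a winner still waiting in the queue keeps accruing days, so it surfaces as overdue instead of disappearing from the report.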

What's worth remembering is that test wins, while real and valuable, only represent a fraction of the total ROI picture. Treating them as the whole story leads to inflated projections and eroded trust when reality doesn't match.

2. "Saved" Losses

Once a brand reaches a certain scale, every bad idea that makes it to production is a potential six-figure unforced error. That's not hyperbole; it's simple math: a lower revenue per visitor (RPV), multiplied over millions of sessions. If a harmful experience ships, the revenue impact compounds fast, and no one may notice until much later.
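To make that math concrete, here is a minimal sketch of the revenue at risk from a harmful change. The store size, RPV, and size of the drop are hypothetical, and it simplifies by applying RPV per session rather than per unique visitor.

```python
def revenue_at_risk(sessions: int, baseline_rpv: float, relative_drop: float) -> float:
    """Revenue lost if a change lowers revenue per visitor (RPV) by `relative_drop`.

    sessions       -- sessions exposed to the change (each treated as one visitor)
    baseline_rpv   -- revenue per visitor before the change, in dollars
    relative_drop  -- fractional RPV decline, e.g. 0.02 for a 2% drop
    """
    return sessions * baseline_rpv * relative_drop


# Hypothetical store: 5M sessions per year at $3.00 RPV, and a change that cuts RPV by 2%.
loss = revenue_at_risk(5_000_000, 3.00, 0.02)
print(f"${loss:,.0f}")  # $300,000
```

Even a small relative drop lands in six figures at this volume, which is why a test that catches the drop before it ships is worth counting as a saved loss.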

This is where a rigorous testing culture earns its keep in ways that never appear in a dashboard. When a test reveals that a proposed change hurts performance, that result is valuable. You've just avoided a costly mistake. The fact that this kind of protection is difficult to put a precise dollar figure on doesn't make it any less real.

A mature CRO program should be tracking "saved losses" as its own category, not treating losses as non-events. The absence of damage is a form of return.

3. Just Do It Fixes

Shopify stores are living, evolving things. Apps get added and updated, back-end functionality shifts, product catalogs grow more complex. All of that creates a steady accumulation of bugs, broken elements, and degraded experiences: issues lurking beneath the surface that often affect double-digit percentages of users without anyone realizing it.

A thorough QA smoke test surfaces these issues. And for brands doing $50M or more in annual revenue, fixing what's already broken can pay for the first several months of a CRO engagement on its own. There's no testing required, no statistical significance to wait for, just straightforward improvements that remove friction you didn't know existed.

These aren't glamorous wins, but they're immediate and real.

4. Deeper Customer Knowledge

This is the one most brands undervalue, and arguably the one with the longest tail of impact.

Good CRO research — Voice of Customer surveys, post-purchase data, session analysis, review mining — generates insights about what motivates customers, what causes anxiety, and what language actually resonates with them. When that knowledge stays siloed on the website, you're only capturing a fraction of its value.

The Carnivore Snax case from our webinar makes this concrete. By analyzing first-party reviews and post-purchase responses, the team identified "addictive" as the word customers used most to describe the product. Incorporating that exact language into the homepage headline drove an 8.14% increase in average revenue per user. But the insight didn't stop at the website — it became a creative angle for ads, email, and SMS as well.

That's the real multiplier effect of research-led CRO. The on-site test is just the first deployment of a validated insight. The same learning can ripple across paid social creative, email subject lines, video scripts, and more.

The Honest Breakdown

When you map out where CRO ROI actually comes from versus where people expect it to come from, the gap is striking. Most brands expect test wins to account for nearly all of the return. In practice, test wins are a minority contribution (roughly 25% to 40% of total impact), while saved losses, bug fixes, and customer knowledge collectively drive the majority.
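One way to see this breakdown for your own program is to estimate impact per source and compute each source's share. The sketch below assumes you already have dollar estimates for each category; the figures are purely illustrative, not measurements from any brand, and saved losses and customer knowledge will always be rougher estimates than test wins.

```python
def share_by_source(impact: dict[str, float]) -> dict[str, float]:
    """Fraction of total estimated impact attributed to each source."""
    total = sum(impact.values())
    return {source: value / total for source, value in impact.items()}


# Hypothetical annual impact estimates, in dollars, for one program.
impact = {
    "test_wins": 300_000,
    "saved_losses": 250_000,
    "just_do_it_fixes": 200_000,
    "customer_knowledge": 250_000,
}
shares = share_by_source(impact)
print(f"test wins: {shares['test_wins']:.0%}")  # test wins: 30%
```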

That's not a knock on testing. It's a recalibration of what a CRO program actually is: a systematic process for understanding customers deeply and translating that understanding into better experiences everywhere, not just in the variants of an experiment.

A well-run program drives all four types of return natively. If you're only measuring the first one, you're not just undervaluing what you have; you're also making worse decisions about where to invest next.

Want to go deeper on this? Check out the full Shopify CRO Reality Check webinar, or explore our free Shopify CRO Upgrade Kit at go.enavi.co/upgrade-kit.